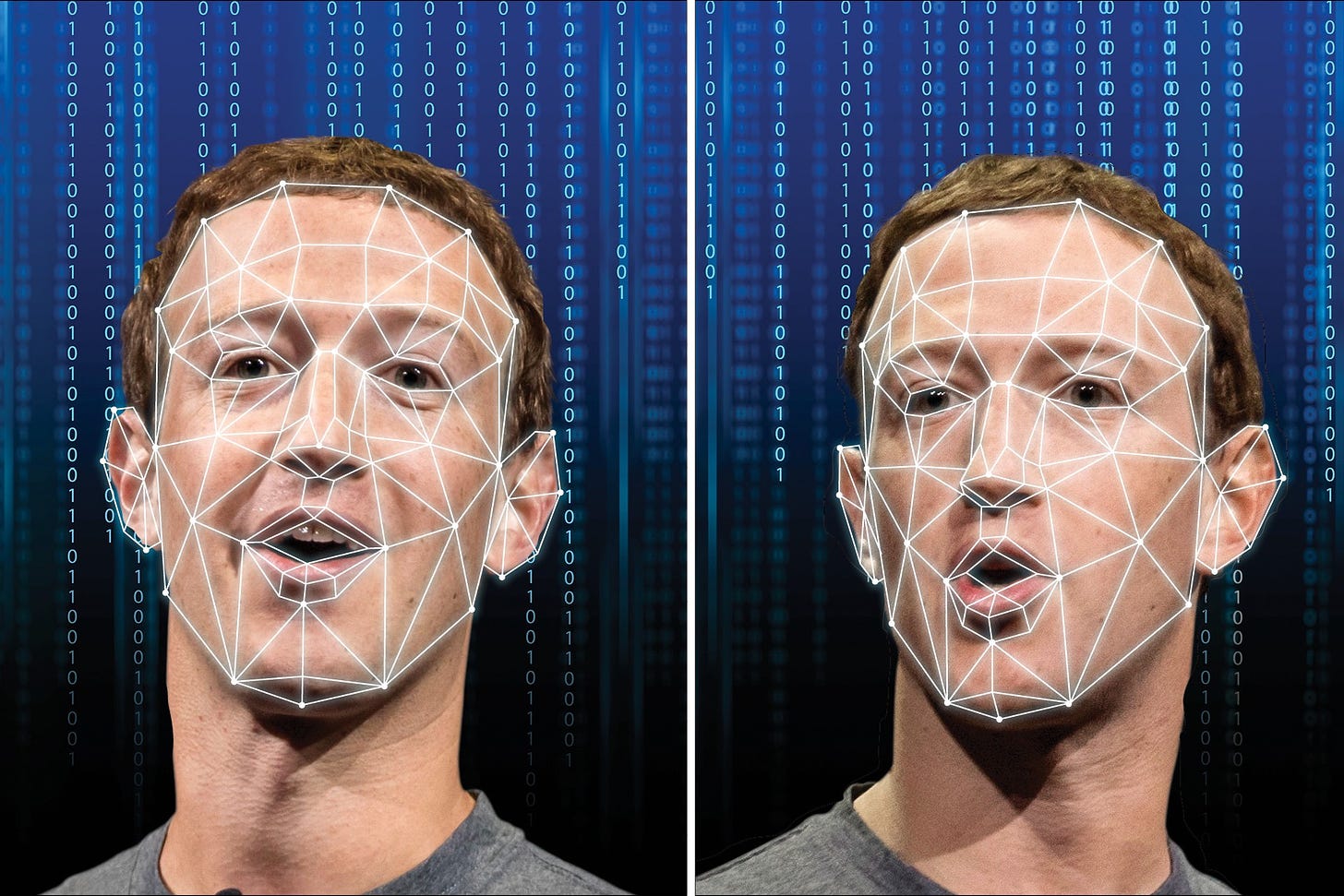

AI fake-face generators can be rewound to reveal the real faces they trained on

Researchers are calling into doubt the popular idea that deep-learning models are “black boxes” that reveal nothing about what goes on inside.

How AI is reinventing what computers are

Every year, right on cue, Apple, Samsung, Google, and others drop their latest releases. These fixtures in the consumer tech calendar no longer inspire the surprise and wonder of those heady early days. But behind all the marketing glitz, there’s something remarkable going on.

Google’s latest offering, the Pixel 6, is the first phone to have a separate chip dedicated to AI that sits alongside its standard processor. And the chip that runs the iPhone has for the last couple of years contained what Apple calls a “neural engine,” also dedicated to AI. Both chips are better suited to the types of computations involved in training and running machine-learning models on our devices, such as the AI that powers your camera. Almost without our noticing, AI has become part of our day-to-day lives. And it’s changing how we think about computing.

This startup is creating personalized deepfakes for corporations

Major companies in India are using Rephrase.ai to create avatars of celebrities and executives, and commercial use is coming soon.

State of Data Science and Machine Learning 2021

Over 25,000 data scientists and ML engineers submitted responses on their backgrounds and day-to-day experience: everything from educational details to salaries to preferred technologies and techniques.

Peter Norvig: Today’s Most Pressing Questions in AI Are Human-Centered

The AI expert, who joins Stanford HAI as a Distinguished Education Fellow, discusses building inclusive education and broadening access for students.

A Stanford study of Japanese and U.S. Twitter users sheds light on why emotional posts are more likely to go viral

It happens all too often: You log on to social media for a fun break, but you find yourself riled up and angry an hour later. Why does this keep happening?

The Intelligence of Bodies

The philosophical and musical failings of “Beethoven X: The AI Project”

Predicting Spreadsheet Formulas from Semi-structured Contexts

A new model that learns to automatically generate formulas based on the rich context around a target cell.

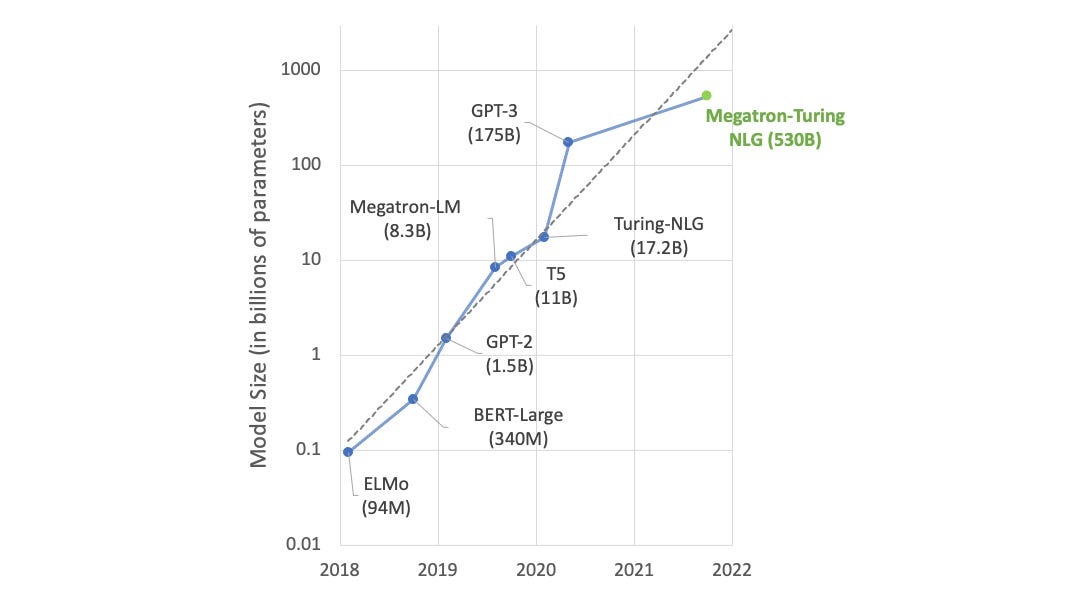

Using DeepSpeed and Megatron to Train Megatron-Turing NLG 530B, the World’s Largest and Most Powerful Generative Language Model

The DeepSpeed- and Megatron-powered Megatron-Turing Natural Language Generation model (MT-NLG) is the largest and most powerful monolithic transformer language model trained to date, with 530 billion parameters. It is the result of a joint effort between Microsoft and NVIDIA to advance the state of the art in AI for natural language generation.

GPT-4 is listening to us now | Joscha Bach and Lex Fridman